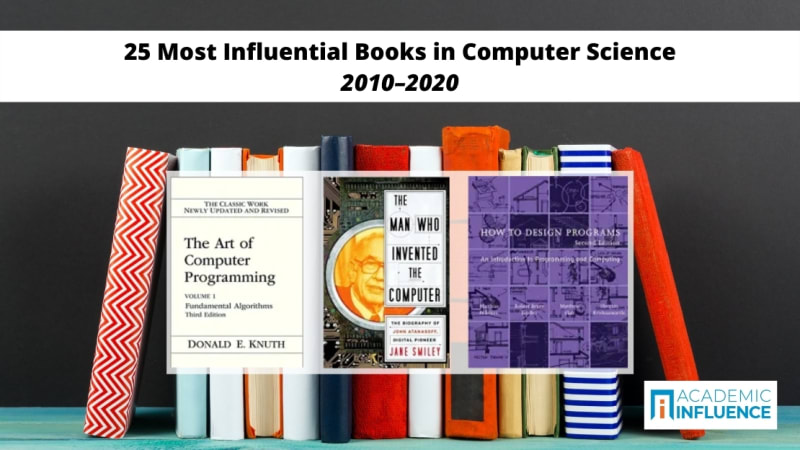

25 Most Influential Books in Computer Science 2010–2026

Computer science, as we interpret it here, encompasses both the academic study of computing systems and philosophical and sociological reflections on the impact of computers upon human society.

Key Takeaways

- Computer science is an extremely challenging field of study, and programming students will benefit from studying and reading outside their regular coursework. The books on our list of the most influential computer science titles are must-reads!

- There are numerous forms of learning when it comes to programming: university courses, video-based classes (like Udacity), interactive classes (like those offered by Codecademy), and online courses, just to name a few. But computer science books can be just as helpful.

- With so many computer science books available today, choosing the right ones can be overwhelming. The key is to know exactly what you want to study.

This list identifies 25 books published between 2010 and 2026 that have shaped how researchers, engineers, and informed readers think about computing today. Selection emphasizes demonstrable influence—measured by sustained citation and discussion across academic literature and mainstream outlets—rather than raw sales or introductory textbook popularity. That focus highlights works that altered research agendas, introduced widely adopted methods, or reframed technical and societal debates about computing.

We intentionally exclude routine reference manuals, narrowly technical textbooks, and most fiction to emphasize books whose ideas propagated beyond narrow specialties. What follows is a concise ranking of titles whose concepts and arguments have had the clearest interdisciplinary and public impact across the last decade and a half.

The Must-read Computer Science Books

Computer science books teach more than the semantics and syntax of programming languages. They are written to help everyone, computer science majors in particular, organize their thinking and become efficient problem solvers, qualities that every programmer needs. There is much more to computer science books than learning programming languages like Java, Python, and C++.

Choosing the Right Computer Science Books

How do you choose the right computer science books?

Know the Technology You Want to Learn: This step is sometimes inherent, especially for programmers who already have programming skills but still need to learn something new to complete a project. Suffice it to say, there are times when even the most experienced programmers will not know what they want to learn at all.

Beginners in programming struggle to choose their first computer language. The more advanced programmers sometimes struggle to remain relevant and knowledgeable in a field that is constantly changing and evolving.

Other programmers can be a great source of information on which languages and skills are important for specific computer programming jobs. They may also be able to point you to an influential book or two that can help strengthen your knowledge base.

Beginning programmers will want to start with the basics. Find books that talk about the fundamentals of programming, data structures, and algorithms. These things are staples in any learning curriculum of computer science. Learning about computer hardware is just as important.

Evaluate the Book: When evaluating a book, check the table of contents to get a sense of the specific topics covered. Do the topics align with your expectations? How broad (or narrow) is the scope? The formatting is also very important. When glancing through a page, do you see short paragraphs broken up with highlighted tips, lists, and diagrams? The complexity and length of the sentences matter as well. Technical books should explain concepts as simply as possible. A good book is designed to present vital information in the most easy-to-understand manner.

The conversational style of the book also matters. Does the author use second-person pronouns like “you” and “your”? Does the book sound fun and a bit informal? Is the author trying to engage the readers with questions?

If these characteristics are present, then the author knows something about learning theories. The more readers are engaged in the book, the more they benefit from what they’re reading. Finally, book reviews are also important when choosing the best computer science books. If other readers vouch for a book, it will most likely serve you well too.

Read on for a look at the 25 Most Influential Books in Computer Science.

25 Most Influential Books in Computer Science

The Art of Computer Programming

By: Donald Knuth, 1968

Knuth (b. 1938) is Professor Emeritus of computer science at Stanford University in Stanford, California. Born in Milwaukee, Wisconsin, he obtained his PhD in mathematics in 1963 from the California Institute of Technology (Caltech). In 1974, Knuth won the Turing Award, often described as the equivalent of the Nobel Prize for computer science.

The book under consideration here is a classic, but no ordinary one. An ordinary classic is a work that never gets stale. This extraordinary classic is one that is never even finished! Begun in 1962 as a single planned volume of twelve chapters (the first volume appeared in 1968), the work has gradually but continuously expanded over the intervening 60 years.

Today, The Art of Computer Programming comprises four separate volumes. The last volume is divided into two parts, only the first part of which (consisting of Fascicles 0–4) has been published so far, under the designation Volume 4A.

The second part of Volume 4—to be denominated as Volume 4B—has been announced for the near future. It will consist of an unspecified number of additional fascicles, the first of which, Fascicle 5, was published separately in 2019. More volumes may appear after that.

The topics covered by Volumes 1–4A of this authoritative and encyclopedic work include the basic concepts of fundamental algorithms, random numbers, arithmetic algorithms, sorting, searching, and combinatorics.

The Man Who Invented the Computer

By: Jane Smiley, 2010

Smiley (b. 1949) is best known as a novelist. She won the Pulitzer Prize for Fiction in 1991 for her bestselling novel, A Thousand Acres, which was loosely based on Shakespeare’s play, King Lear.

This book is a biography of the American physicist, John V. Atanasoff (1903–1995). Atanasoff’s father, who was an electrical engineer, was born in Bulgaria and emigrated to the US in 1889. Born in Hamilton, New York, the son was raised mostly in Florida.

Atanasoff received his bachelor’s degree in 1925 from the University of Florida. He then earned his master’s degree in mathematics in 1926 from Iowa State College (now Iowa State University) and his PhD in theoretical physics in 1930 from the University of Wisconsin at Madison. After graduation, Atanasoff accepted a position teaching mathematics and physics back at Iowa State College.

In Atanasoff’s day, scientists used slide rules, mechanical calculating machines and tabulators to solve math problems. Atanasoff teamed up with a graduate student named Clifford Berry (1918–1963) to try to develop a new method of calculation that would be faster and more reliable than the ones available at the time. The device they came up with in 1939 at Iowa State became known as the “Atanasoff-Berry Computer” (ABC).

The ABC employed Boolean logic and binary arithmetic to solve as many as 29 simultaneous linear equations. While it did not employ a central processing unit (CPU), it did use vacuum tubes in order to perform digital computations. Thus, the ABC’s design represents one of the earliest examples of an electronic digital computer.

The ABC also made use of electrical capacitance to create regenerative memory—a process similar to today’s dynamic random-access memory (DRAM).
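To make concrete what solving simultaneous linear equations involves, here is a minimal sketch of Gaussian elimination with partial pivoting. It is the standard textbook method, not a reconstruction of the ABC's circuitry or procedure. The function name `solve_linear_system` and the sample system are illustrative assumptions, and ordinary floating-point numbers stand in for the machine's binary arithmetic.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting.

    a is a list of n rows of n coefficients; b is a list of n constants.
    Illustrative helper only, not the ABC's actual procedure.
    """
    n = len(a)
    # Build an augmented matrix so row operations update both sides at once.
    m = [list(map(float, row)) + [float(rhs)] for row, rhs in zip(a, b)]

    for col in range(n):
        # Pick the row with the largest pivot to limit rounding error.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("system is singular or nearly singular")
        m[col], m[pivot] = m[pivot], m[col]

        # Eliminate this variable from every row below the pivot row.
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]

    # Back-substitute from the last equation upward.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        known = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - known) / m[r][r]
    return x


if __name__ == "__main__":
    # 2x + y = 5 and x - y = 1 have the solution x = 2, y = 1.
    print(solve_linear_system([[2, 1], [1, -1]], [5, 1]))
```

A system of 29 equations has the same shape as this two-equation example, just with a 29-by-29 coefficient list.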

How to Design Programs

By: Matthias Felleisen, Robert Bruce Findler, Matthew Flatt, and Shriram Krishnamurthi, 2001

Felleisen is Trustee Professor in the Khoury College of Computer Sciences at Northeastern University in Boston, Massachusetts. He was born in Germany and immigrated to the US when he was 21 years old. He obtained his PhD in 1987 from Indiana University in Bloomington and spent several years teaching at Rice University in Houston, Texas, before moving to Northeastern in 2001.

Robert Bruce Findler is a professor of electrical engineering and computer science at Northwestern University in Evanston, Illinois. He received his PhD in 2002 from Rice University, where he worked under the supervision of Matthias Felleisen.

Matthew Flatt is a professor in the School of Computing at the University of Utah in Salt Lake City. He received his PhD in 1999 from Rice University, where he too worked under the supervision of Matthias Felleisen.

Shriram Krishnamurthi is a professor of computer science at Brown University in Providence, Rhode Island. He was born in Bengaluru (formerly Bangalore), the capital of the Indian state of Karnataka. He received his PhD in 2000 from Rice University, where he also worked under the supervision of Matthias Felleisen.

In the 1990s, Felleisen founded PLT, a research group named for “programming language theory,” the branch of computer science that investigates the analysis, classification, design, and implementation of programming languages according to their computational features.

Felleisen is also the author of TeachScheme! (the predecessor of ProgramByDesign), a program which teaches program-design principles to beginners. Felleisen and his three former graduate students published this book in 2001 in order to make his insights available to a wider audience. A second edition of How to Design Programs was released in 2018.

Born Digital

By: John Palfrey and Urs Erwin Gasser, 2008

Palfrey (b. 1972) received his bachelor’s degree in 1994 from Harvard College. He has earned two doctorates: a DPhil in history from the University of Cambridge (1997) and a JD from Harvard Law School (2001). Palfrey taught at Harvard Law School for many years. Today, he is president of the MacArthur Foundation.

Gasser (b. 1972) is a Swiss-born professor at Harvard Law School. He also holds visiting professorships at Keio University in Japan and the University of St. Gallen in Switzerland.

Their book is an exploration of the ways in which the thinking of “digital natives” differs from that of “digital immigrants.” A “digital native” is someone who was born after the personal computing revolution (roughly after 1980). Such persons have grown up in a world equipped with computers and have never known anything else. A “digital immigrant” is someone born substantially before 1980, whose early childhood did not include computers and who has had to learn to use them as a teenager or adult.

A revised and expanded edition of the book was published in 2016, with the subtitle “How Children Grow Up in a Digital Age.”

Concrete Mathematics

By: Ronald Graham, Donald Knuth, and Oren Patashnik, 1989

Graham (1935–2020) received his PhD in mathematics in 1962 from the University of California, Berkeley. He spent much of his career at Bell Labs and AT&T Labs. Later, he taught at Rutgers University in New Brunswick, New Jersey, and the University of California, San Diego.

For Knuth’s bio, see #1 above.

Patashnik (b. 1954) is a researcher at the Center for Communications Research in La Jolla, California. During the 1980s, he worked at Bell Labs. He received his PhD in computer science in 1990 from Stanford University, where he worked under the supervision of Donald Knuth.

Concrete Mathematics is a popular introductory textbook on the mathematics that underpins computer science, written in a witty, accessible style. The book is based on a set of lectures which Knuth began delivering in 1970 at Stanford University.

It also draws on the first hundred pages or so of the “Mathematical Preliminaries” section from the first volume of Knuth’s The Art of Computer Programming. As a result, readers can use it as an introduction to that series of books.

The term “concrete” in the book’s title may be understood in two ways: (1) the math involved in the book is “concrete” in the sense that it is “applied,” as opposed to “abstract”; and (2) the title may also be construed as a contraction of the phrase “CONtinuous and disCRETE.”

A second edition of Concrete Mathematics was published in 1994.

Introduction to Algorithms

By: Thomas H. Cormen, Charles E. Leiserson, Ron Rivest, and Clifford Stein, 1990

Cormen (b. 1956) is a professor of computer science at Dartmouth College. He obtained his PhD in computer science from the Massachusetts Institute of Technology (MIT) in 1993.

Leiserson (b. 1953) is a professor of computer science at MIT. He received his PhD in computer science from Carnegie Mellon University in Pittsburgh, Pennsylvania in 1981.

Rivest (b. 1947) is an Institute Professor at MIT. He is also a member of MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL). He received his PhD in computer science in 1974 from Stanford University. In 2002, Rivest received the Turing Award for his work in computer cryptography.

Stein (b. 1965) is a professor of industrial engineering and operations research, with a cross-appointment in computer science, at Columbia University in New York City. He earned his PhD in computer science from MIT in 1992.

Introduction to Algorithms is just what its title says: an introductory textbook to algorithms used in computer science. The book covers many of the fundamental topics students will encounter in this field, including (but not limited to) the following:

- Algorithms

- Sorting and Order Statistics

- Elementary and Advanced Data Structures

- Design and Analysis Techniques

- Graph Algorithms

- Matrix Operations

- Linear Programming

- Polynomials and the Fast Fourier Transform (FFT)

- NP-Completeness

The first three persons listed above co-authored the first edition of this book, which was published in 1990. A second edition appeared in 2001, at which time Stein was added as the fourth co-author. A third edition of the book was released in 2009 and a fourth edition has been announced for 2022.
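As a small taste of the sorting topic in the list above, here is a sketch of insertion sort, a standard quadratic-time algorithm that introductory algorithms courses often start with. The code is an illustrative Python rendering, not an excerpt from the book, and the function name `insertion_sort` is an assumption made for this example.

```python
def insertion_sort(items):
    """Return a new sorted list using insertion sort (O(n^2) comparisons)."""
    result = list(items)  # copy so the caller's list is left unchanged
    for i in range(1, len(result)):
        key = result[i]
        j = i - 1
        # Shift larger elements one slot right to open a gap for key.
        while j >= 0 and result[j] > key:
            result[j + 1] = result[j]
            j -= 1
        result[j + 1] = key
    return result


if __name__ == "__main__":
    print(insertion_sort([5, 2, 4, 6, 1, 3]))
```

Analyzing why the nested loops cost quadratic time in the worst case is the kind of reasoning the "Design and Analysis Techniques" topic covers.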

A New Kind of Science

By: Stephen Wolfram, 2002

Wolfram (b. 1959) was born in London into a family of German Jewish refugees. He was a child prodigy, who published his first peer-reviewed papers in quantum field theory and particle physics at the age of 15. He received his PhD in particle physics in 1979 from the California Institute of Technology (Caltech), where his dissertation was supervised by the storied Richard Feynman.

After graduating, Wolfram joined the faculty of Caltech, before moving to the Institute for Advanced Study in Princeton, New Jersey in 1983. In 1984, he was involved in the founding of the Santa Fe Institute for the study of complex systems in Santa Fe, New Mexico. Two years later, he founded the Center for Complex Systems Research (CCSR) at the University of Illinois at Urbana–Champaign.

During this period of his career, Wolfram was primarily involved in two projects: developing the theory of cellular automata and developing a new computer algebra system, called Mathematica.

In 1988, Wolfram left academia to found his own company, Wolfram Research, in order to turn his ideas into commercial reality. Wolfram Language is one of the many new products developed by Wolfram’s company.

In his first book, A New Kind of Science, published in 2002, Wolfram presents an empirical study of simple computational systems such as cellular automata. He argues that such studies are important because the universe is inherently discrete in nature, not continuous. Hence, computer simulations based on discrete mathematics are inherently better-suited to the development of predictive models of empirical reality—especially, complex systems—than the continuous mathematics of traditional physics based on partial differential equations.

Wolfram predicts that his computational approach to physics will have a revolutionary influence on physics, chemistry, biology, and, indeed, all areas of science (hence the book’s title).
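For readers who have not met a cellular automaton, the following is a minimal sketch of a one-dimensional, two-state ("elementary") automaton. Rule 30 is one of the rules Wolfram studies. The function names `step` and `run`, the wrap-around boundary, and the grid width are assumptions chosen for illustration, not details taken from the book.

```python
def step(cells, rule=30):
    """Apply one update of an elementary cellular automaton.

    cells is a list of 0/1 values; the row wraps around at the edges
    (a simplifying assumption). rule is the Wolfram rule number, 0-255.
    """
    n = len(cells)
    nxt = []
    for i in range(n):
        left, center, right = cells[(i - 1) % n], cells[i], cells[(i + 1) % n]
        # The three neighbors form a 3-bit index into the rule's binary digits.
        index = (left << 2) | (center << 1) | right
        nxt.append((rule >> index) & 1)
    return nxt


def run(width=31, steps=15, rule=30):
    """Start from a single live cell in the middle and collect each row."""
    row = [0] * width
    row[width // 2] = 1
    rows = [row]
    for _ in range(steps):
        row = step(row, rule)
        rows.append(row)
    return rows


if __name__ == "__main__":
    for row in run():
        print("".join("#" if c else "." for c in row))
```

Each cell's next value depends only on itself and its two neighbors, yet printing the rows shows an irregular triangle of cells. That contrast between a simple rule and complicated behavior is the kind of observation the book builds on.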

Inside Apple

By: Adam Lashinsky, 2012

Lashinsky (born c. 1967) received his bachelor’s degree in history and political science in 1989 from the University of Illinois at Urbana-Champaign. After serving as a reporter, editor, and columnist for several business, economics, and technology journals, Lashinsky was appointed Senior Editor-at-Large for FORTUNE magazine. He writes primarily about Wall Street and Silicon Valley.

The book under consideration here is both a history and an analysis of the leadership patterns, strategies, and tactics adopted by Apple Inc. during its rise to global prominence. Inside Apple places stress on the transition that occurred in 1996 when—with the company on the brink of bankruptcy—Apple co-founder Steve Jobs (1955–2011) returned to his former leadership role.

With only 90 days of cash on hand, Jobs began to turn his company around with a series of brilliantly designed and engineered products that proved to be wildly popular with the public, from the iPod, to the iPhone, to the iPad.

By the time of Jobs’s death in 2011, Apple Inc. had become the largest consumer electronics company in the world as measured by total global revenue. Today, with $274.5 billion in revenue earned in 2020, Apple remains the world’s largest technology company.

Structure and Interpretation of Computer Programs

By: Hal Abelson and Gerald Jay Sussman, with Julie Sussman, 1985

Abelson (b. 1947) is Class of 1992 Professor of Computer Science and Engineering in the Department of Electrical Engineering and Computer Science at the Massachusetts Institute of Technology (MIT). He is a founder and director of both Creative Commons and the Free Software Foundation. He also directed the first implementation of the Logo programming language for the Apple II.

Gerald Sussman (b. 1947) is Panasonic Professor of Electrical Engineering at MIT. He has worked primarily in the areas of artificial intelligence (AI), programming languages, computer architecture, and Very Large-Scale Integration (VLSI) design.

Abelson and Gerald Sussman are both principal investigators with MIT’s Computer Science and Artificial Intelligence Lab (CSAIL). Julie Sussman is a computer programmer.

The book under consideration here is an introductory-level textbook in computer programming, which began life as the prescribed text in the principal authors’ classes at MIT. Presenting its material in Scheme, a dialect of Lisp, the book takes an innovative pedagogical approach. Namely, it makes use of a cast of six fictitious characters with facetious names, each of whom represents a topic or aspect of the field. For example, the character “Lem E. Tweakit” is an irate user, while “Alyssa P. Hacker” is a Lisp hacker.

A second edition of the book was published in 1996.

The Master Algorithm

By: Pedro M. Domingos, 2015

Domingos (born c. 1966) is Professor Emeritus of computer science and engineering at the University of Washington in Seattle. He obtained his bachelor’s and master’s degrees in computer science and electrical engineering in 1988 and 1992, respectively, from the Instituto Superior Técnico in Lisbon, Portugal. He received his PhD in information and computer sciences in 1997 from the University of California, Irvine.

Domingos’s main field of research is machine learning. He is especially known for his work on uncertain inference, in connection with which he invented the Markov logic network.

This book advances a thesis about the nature of general or universal learning—making no distinction between human and machine learning. The thesis is that universal learning consists of five basic components:

- inductive reasoning

- connectionism

- evolutionary computation

- Bayes’s theorem

- analogical modeling

The hypothetical “master algorithm” that is the subject of this book will be a combination of algorithms embodying each of these five features. The author predicts that the master algorithm will become a reality in the near future and that it will grow rapidly in such a way as to approach asymptotically a perfect understanding of the universe and all its contents, including human beings themselves.

Once that occurs, any computer running such a well-trained master algorithm will be able to solve any problem more efficiently than any human being could.

The book was reprinted in 2018.

In the Plex

By: Steven Levy, 2011

Levy (b. 1951) is a journalist and book author who specializes in the subjects of computers, technology, cryptography, the Internet, cybersecurity, and privacy. He received his bachelor’s degree from Temple University in Philadelphia and his master’s degree in literature from Pennsylvania State University at State College.

Levy was formerly chief technology writer and a senior editor for Newsweek magazine and is currently Editor-at-Large for Wired magazine. He has published his work in many prominent venues, including Rolling Stone, The New Yorker, The New York Times Magazine, and Harper’s.

In addition to his journalism, Levy has published eight books including the one under consideration here. This book is a history of the Google company. Its somewhat cryptic title is a reference to the word “googolplex,” from which Google’s young founders derived their company’s name.

The book begins with the origins of the company in 1996 as a dissertation project at Stanford University undertaken by PhD students Larry Page (b. 1973) and Sergey Brin (b. 1973). The result of their brilliant efforts was an Internet search engine that significantly outperformed all others.

The book then traces the rise of Google to the position of world dominance that the company enjoys today, with some 24,000 employees and an annual operating income in excess of $40 billion. The book received very positive reviews. Reviewers emphasized Levy’s ability to make difficult technical material intelligible to a general audience.

The Jargon File

By: Eric S. Raymond, 1991

Raymond (b. 1957) is an American software developer, open-source software advocate, blogger, and author. Raymond is probably best known as the author of the bestselling 1999 book, The Cathedral and the Bazaar on the open-source movement (see #21 below).

By 1991, the author had taken over the curation of an online dictionary of slang terms that had been in existence for some time, and in 1996 he published it in book form as the third edition of the so-called Jargon File. Before bringing Raymond’s efforts on The Jargon File up to date, let us review its history prior to his involvement.

The “Jargon File” was originated by Raphael Finkel (b. 1951) at Stanford University in 1975. In the early years, it was also referred to simply as “the File,” and after the publication of later editions, this first edition came to be referred to as “Jargon-1.”

Finkel soon passed the torch on to Don Woods (b. 1954); a little later it was picked up by Richard Stallman (b. 1953). In 1983, the latest version of the File up to that time was published under the editorship of Guy Steele (b. 1954). This book, which was titled The Hacker’s Dictionary and contained a commentary aimed at a mass market, constituted the first integral public presentation of the File.

The 1983 version of the File was based on contributions by Finkel, Woods, Stallman, Mark Crispin (b. 1949), and Geoff Goodfellow (b. 1956), but it became generally known as “Steele-83” for its general editor.

Raymond took over curation of the File and published it as it stood in 1991 under the title The New Hacker’s Dictionary (known as “Raymond-1991”). However, the explosive growth of the Internet during the early 1990s motivated Raymond to quickly publish yet another new edition, entitled The New Hacker’s Dictionary, Third Edition, in 1996.

In 2000, Raymond released a “version 4.0” of The Jargon File. The latest incarnation of The Jargon File is a Kindle-only edition released in 2019.

Computers and Intractability

By: Michael Garey and David S. Johnson, 1979

Garey (b. 1945) earned his PhD in computer science in 1970 from the University of Wisconsin at Madison. He was employed by AT&T Bell Laboratories from 1970 until 1999, where he worked in the Mathematical Sciences Research Center.

Garey specializes in computational complexity, discrete algorithms, graph theory, scheduling theory, and approximation algorithms. From 1978 until 1981 he served as Editor-in-Chief of the Journal of the Association for Computing Machinery.

Johnson (1945–2016) earned his PhD in mathematics from MIT in 1973. He worked at AT&T Bell Laboratories from 1988 to 2013, where he rose to become head of the Algorithms and Optimization Department. He was also a visiting professor at Columbia University from 2014 to 2016.

A widely used and highly influential textbook, Computers and Intractability was the first book devoted exclusively to problems associated with NP-completeness. Though it is now somewhat outdated (for example, it lacks a discussion of the PCP Theorem), it remains a milestone and a classic of the field.

The reviews of this book could scarcely have been more glowing. For example, one critic wrote:

I consider Garey and Johnson the single most important book on my office bookshelf. Every computer scientist should have this book on their shelves as well. ... Garey and Johnson has the best introduction to computational complexity I have ever seen.

AI Superpowers

By: Kai-Fu Lee, 2018

Lee (b. 1961) was born in Taipei, the capital of Taiwan. In 1973, he immigrated to the US. He earned his bachelor’s degree in computer science from Columbia University in 1983 and his PhD in computer science from Carnegie Mellon University in 1988.

His doctoral dissertation consisted of the development of Sphinx, the first large-vocabulary, speaker-independent, continuous speech recognition system. He later published two technical monographs on speech recognition. Lee has spent his career in the computing industry, moving from Apple, to Silicon Graphics, to Microsoft, to Google.

In 2009 Lee resigned from Google and undertook a career as a venture capitalist. His main project has been Sinovation Ventures, a leading Chinese technology venture capital firm with offices in Beijing, Shanghai, Guangzhou, Shenzhen, and Nanjing. The stated goal of Sinovation Ventures is to create five Chinese start-ups per year in the areas of Internet businesses and cloud computing.

In this book, Lee brings his dual expertise in technology and business in China to bear on an analysis of present technological, economic, and political trends and where they are likely to lead. Lee makes many startling and controversial observations in this book. For example, he observes that “If data is the new oil, then China is the new Saudi Arabia.” He also praises China for subsidizing and according high status to the AI industry.

Another controversial section of the book explores the future impact that AI is likely to have on the nature of work available to the mass of the population in America and elsewhere.

The Society of Mind

By: Marvin Minsky, 1986

Minsky (1927–2016) was born and raised in New York City. After a tour of duty in the US Navy during World War II, he received his bachelor’s degree in mathematics in 1950 from Harvard University and his PhD in mathematics in 1954 from Princeton University.

Minsky joined the faculty of MIT in 1958 and remained there throughout his career. Together with John McCarthy (1927–2011), he co-founded what is known today as the MIT Computer Science and Artificial Intelligence Laboratory.

Minsky is a seminal figure in the field of artificial intelligence (AI). His 1969 book Perceptrons, co-authored with Seymour Papert (1928–2016), is considered a foundational document in the history of machine learning based on neural networks. The same year that the pathbreaking book Perceptrons was published, Minsky was awarded the prestigious Turing Award.

The Society of Mind covers a very wide range of topics, from language, memory, and learning to consciousness, the self, and free will. For this reason, it is as much a work of philosophy as a computer science text.

In this book, the author presents his own model of human intelligence step by step. Minsky’s basic idea is that natural human intelligence is built up from the interactions among simple, mindless parts, which he calls “agents” (an unfortunate word choice, since in ordinary speech “agents” are themselves intelligent).

Minsky then describes the result of the interactions among these myriad sub-intelligent “agents” as a “society of mind”—his term for intelligence, whether natural or artificial.

Superintelligence

By: Nick Bostrom, 2014

Bostrom (b. 1974) was born as Niklas Boström in Helsingborg, Sweden. He received his bachelor’s degree in philosophy, mathematics, and artificial intelligence from the University of Gothenburg in 1994. Bostrom then earned two master’s degrees, one in philosophy and physics from Stockholm University and the other in computational neuroscience from King’s College London, both in 1996. Finally, Bostrom received his PhD in philosophy from the London School of Economics in 2000.

In 2005, Bostrom founded the Future of Humanity Institute (FHI) at the University of Oxford. FHI explores the far future of human civilization. Bostrom is also associated with the University of Cambridge’s Centre for the Study of Existential Risk.

After graduating, he briefly taught at Yale University in the US and then occupied a Postdoctoral Fellowship back at the University of Oxford. After leaving academia, Bostrom made his living as a freelance writer. He has published four books and some 200 peer-reviewed academic papers.

Bostrom writes on many topics, but one of his main fields of interest is artificial intelligence and the threat it poses to humanity’s future. The book under consideration here is his most in-depth discussion of this theme. The “superintelligence” referred to in the title is a kind of general intelligence far exceeding that of human beings, with which the author claims computers and robots will be equipped at some point in the future.

The book offers an accessible account of the technical issues underlying artificial intelligence and its philosophical interpretations. But the author does more than merely explain these matters. He also considers what changes in political organization might be required for humanity to effectively protect itself from the threat he sees as being posed by the advent of superintelligence.

The book was reprinted in 2016.

How to Survive a Robot Uprising

By: Daniel H. Wilson, 2005

Wilson (b. 1978) was born in Tulsa, Oklahoma. He is a member of the Cherokee Nation. Wilson earned his bachelor’s degree in computer science in 2000 from the University of Tulsa. As an undergraduate, he spent a semester abroad studying philosophy at the University of Melbourne.

Wilson completed a double master’s degree program, one in machine learning and one in robotics, as well as a PhD program in robotics, all in 2005 from Carnegie Mellon University’s Robotics Institute in Pittsburgh, Pennsylvania.

Despite his sterling academic credentials, Wilson did not pursue an academic career, but rather has made his living as a freelance writer. Wilson has published six novels, one short story collection, a graphic novel, and four comic books. He has also authored or co-edited seven works of non-fiction, including the book under consideration here.

How to Survive a Robot Uprising was optioned by a Hollywood producer in 2005, Wilson’s last year in graduate school. Although the film has not yet been made, this experience led to Wilson’s involvement with movies.

He has gone on to write two screenplays himself based on his own novels. Two more of his novels have also been optioned, as well as one short story. The latter is his only film project to be produced so far. The Nostalgist was directed by Giacomo Cimino and premiered at the Palm Springs International ShortFest in 2014.

This book is a tongue-in-cheek satire in the form of a how-to manual. With abundant scientific detail, it explains how to survive in a world in which superintelligent robots (see #16 above) have rebelled against their human masters. The effectiveness of its dark, deadpan humor derives from its subtle exaggeration of scientific facts beyond the bounds of reasonableness.

The Emotion Machine: Commonsense Thinking, Artificial Intelligence, and the Future of the Human Mind

By: Marvin Minsky, 2006

Late in his life, Minsky (see #15 above) wrote a second book to update and clarify the ideas he expressed in his earlier book, The Society of Mind, published in 1986.

In the new book, Minsky basically tries to integrate the emotional or affective dimension of natural human intelligence (or “common sense”) into his earlier “society of mind” theory of intelligence as the resultant effect arising from the interactions among myriad unintelligent “agents.”

In a nutshell, Minsky downplays the distinctiveness of affectivity, arguing that the various emotions are simply “ways to think” about the different classes or types of problem situations that brains encounter in their interaction with the world.

He further claims that the brain employs “rule-based mechanisms” embodying “selection rules” (basically, algorithms) in order to “turn on” the appropriate emotions when a brain is faced with a specific kind of problem situation.

The author also uses his new book to review the achievements of AI, explaining why modeling intelligence, whether human or artificial, is so difficult.

Finally, Minsky considers such fundamentally philosophical questions as whether artificial brains embodied in computers and robots will really be able to think and, if so, what their experiences—their pleasures, sufferings, and so on—might be like.

Modern Operating Systems

By: Andrew S. Tanenbaum and Herbert Bos, 1992

Tanenbaum (b. 1944) was born in New York City and grew up in White Plains, New York. He received his bachelor’s degree in physics in 1965 from MIT and his PhD degree in astrophysics in 1971 from the University of California, Berkeley.

Tanenbaum is currently Professor Emeritus of Computer Science at the Vrije Universiteit Amsterdam (Free University of Amsterdam) in the Netherlands. He was a co-founder and served as the first Dean of a Dutch academic consortium known as the Advanced School for Computing and Imaging, with faculty from Vrije Universiteit Amsterdam, Delft University of Technology, and Leiden University.

Tanenbaum is perhaps best known as the creator of MINIX, a free, Unix-like operating system for teaching. He is also well known for a famous 1992 Usenet debate with Linus Torvalds (b. 1969) over operating system kernel design. In 2004, he founded the web site electoral-vote.com. Finally, Tanenbaum has advised an unusually large contingent of graduate students during his career at the Vrije Universiteit Amsterdam, many of whom have gone on to distinguished careers in computer science.

Herbert Bos is currently a full professor of computer science at the Vrije Universiteit Amsterdam. He received the degree of Ingenieur in computer science in 1994 from the University of Twente in Enschede, the Netherlands, and his PhD in computer science in 1999 from the University of Cambridge’s Computer Laboratory.

Bos joined Tanenbaum as co-author of Modern Operating Systems for the fourth edition, published in 2014. The book under consideration here is a direct descendant of Operating Systems: Design and Implementation, a textbook that Tanenbaum first published in 1987. The new book is basically the same as the old one, with the material relating to implementation omitted.

The book’s code examples are written in C, and it covers the fundamentals of MINIX and other operating systems, including many scheduling algorithms. Now in its fourth edition, Modern Operating Systems has proven to be a very popular textbook worldwide.
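To give a flavor of what a scheduling algorithm looks like, here is a minimal sketch of round-robin scheduling, one classic policy of the kind operating systems textbooks cover. It is not taken from the book: the function name, the process names, and the burst times are all hypothetical, and the sketch assumes every process arrives at time zero and that switching between processes costs nothing.

```python
from collections import deque


def round_robin(burst_times, quantum):
    """Simulate round-robin scheduling (illustrative sketch only).

    burst_times: dict mapping a hypothetical process name to the CPU time it needs.
    quantum: the maximum time slice a process receives per turn.
    Returns a dict mapping each process name to its completion time.
    """
    if quantum <= 0:
        raise ValueError("quantum must be positive")

    queue = deque(burst_times.items())  # ready queue, in arrival order
    clock = 0
    completion = {}

    while queue:
        name, remaining = queue.popleft()
        run = min(quantum, remaining)  # run for one slice or until done
        clock += run
        remaining -= run
        if remaining > 0:
            queue.append((name, remaining))  # not finished: back of the queue
        else:
            completion[name] = clock

    return completion


if __name__ == "__main__":
    print(round_robin({"A": 5, "B": 3, "C": 1}, quantum=2))
```

With a quantum of 2, the short job C finishes first even though it was queued last, which is the fairness property that makes round-robin attractive for interactive systems.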

Things a Computer Scientist Rarely Talks About

By: Donald Knuth, 2001

Often referred to as the “father of the analysis of algorithms,” Donald Knuth (see #1 and #5 above) is uniquely qualified to comment on the larger philosophical significance of computer science.

With this book, Knuth has given us the benefit of his unparalleled experience and wisdom concerning the connection between computer technology and religion.

Among the large questions Knuth addresses in this remarkable book are:

- the relationship between computation and infinity

- the bearing of probability theory on free will

- the place of mathematics in one’s personal understanding of the sacred

The book began life as a series of lectures delivered at MIT in 1999 on the topic of computers and religion. The following is a list of the lecture titles, which also makes up the published book’s table of contents:

- Lecture 1: Introduction

- Lecture 2: Randomization and Religion

- Lecture 3: Language Translation

- Lecture 4: Aesthetics

- Lecture 5: Glimpses of God

- Lecture 6: God and Computer Science

For the book, Knuth added an additional concluding section entitled “Panel: Creativity, Spirituality, and Computer Science.”

One reviewer, writing in the immediate aftermath of the lectures in 1999, summed up Knuth’s work with the following headline:

“Computer God Speaks About God, Computers”

The Cathedral and the Bazaar

By: Eric S. Raymond, 1999

In this book, Raymond (see #12 above) recounts his observations of the Linux kernel development process and his experience as manager of the open source project known as fetchmail. He uses this history as a backdrop for reflections on the eternal struggle between top-down and bottom-up approaches to system design.

The title of the book is based on the symbols of the medieval cathedral as an example of a top-down (centralized, goal-directed) system and of the bazaar (or market) as an example of a bottom-up (distributed, self-organizing) system.

The book grew out of a paper the author first presented at the Linux Kongress in 1997 in Würzburg, Germany. In 1999, the book was both published in English and self-published in German as Die Kathedrale und der Basar.

The book also advances many ideas concerning the best way to practice software development, including the following assertions:

- Good software flows from a programmer’s personal interests and commitments

- Rewriting and beta testing are essential to the process

- Intelligently designed data structures are more important than coding

- The next-best thing to having your own good ideas is recognizing your users’ good ideas

- Innovation often lies in reconceptualizing the problem

- Simplify as much as possible

- A good tool should do what it is intended to do; a great tool does unanticipated things

The Age of Spiritual Machines

By: Ray Kurzweil, 1999

Kurzweil (b. 1948) was born and raised in New York City. He obtained his bachelor’s degree in computer science and literature in 1970 from MIT, where he was a student of Marvin Minsky’s (see #15 and #18 above).

While still an undergraduate at MIT, Kurzweil founded a company which used a program he had written to match high school students with potential colleges. He soon sold this company for three-quarters of a million dollars in today’s money.

In 1974, Kurzweil used his profits to start another company, Kurzweil Computer Products, Inc. One of the new company’s first products was the first omni-font optical character recognition (OCR) system, a computer program capable of recognizing text written in any normal font. This product was immensely successful. Among the company’s many OCR-related products is the Kurzweil Reading Machine for the blind.

In 1982, Kurzweil founded Kurzweil Music Systems, whose first product, released in 1984, was the Kurzweil K250, a vastly improved electronic music synthesizer. Tests showed that trained musicians were unable to distinguish between sounds produced by a Kurzweil K250 set on piano mode and those produced by a real grand piano.

During the late 1980s and 1990s, Kurzweil founded several new companies, including one in the education sector, which combined his previously developed OCR capabilities with new pattern-recognition technologies to help people with disabilities such as blindness, dyslexia and attention-deficit hyperactivity disorder (ADHD) with their school work.

Beginning around 1990, and at an increasing pace after 2000, Kurzweil turned his attention to writing projects, mainly on the topics of computer-human interaction and futurism more generally. Altogether, he has written seven nonfiction books, including the one under consideration here, as well as one novel.

In a nutshell, Kurzweil argues in this book that continuous improvements in the intelligence of computers must inevitably lead to machines with human-like and, eventually, more-than-human capabilities, including the emergence of subjective consciousness (hence the book’s title). Kurzweil dubs this event “the singularity,” meaning the moment when computers pass a point of no return, when it will no longer be possible for human beings to control them.

The Age of Spiritual Machines is a mixture of solid computer science, reasonable speculation about the future development of artificial intelligence, controversial philosophy, and dubious claims about the future. The book attracted a large amount of attention from various intellectual communities, from academic computer scientists and philosophers to the fantasy and science fiction community and the general reading public.

An anthology of essays on this book, entitled Are We Spiritual Machines?: Ray Kurzweil vs. the Critics of Strong A.I. and edited by Jay W. Richards, was published in 2002. It contained contributions by Kurzweil and the distinguished philosopher John Searle (b. 1932), among others.

In addition to Searle, many other philosophers have weighed in on The Age of Spiritual Machines, as well as its sequel, The Singularity Is Near (2005). In general, the computer science community’s view of Kurzweil’s work has been far more favorable than that of philosophers.

The Mythical Man-Month: Essays on Software Engineering

By: Frederick P. Brooks, Jr., 1975

Brooks (1931–2022) was born in Durham, North Carolina. He obtained his bachelor’s degree in physics in 1953 from Duke University, located in his hometown. He then received his PhD in applied mathematics (computer science) in 1956 from Harvard University in Cambridge, Massachusetts.

In 1956, Brooks went to work for IBM, where he contributed to the development of several new computer systems before being appointed to lead the development of the IBM System/360 family of computers and the OS/360 software package. During this period, he coined the term “computer architecture” to describe the design of a computer system as it appears to the programmer.

In 1964, Brooks accepted a position with the University of North Carolina at Chapel Hill, where he spent the rest of his career. He won the coveted Turing Award in 1999.

Brooks’s book examines various aspects of software project scheduling and management. It advances the thesis that the “man-month” (the amount of work that one person can theoretically perform in one month) is not a useful metric for measuring progress in software engineering.

This book has been widely read and discussed. Its main idea was summed up by Brooks with the catchy phrase “adding manpower to a late software project makes it later.” In this form, it became famous as “Brooks’s Law.”
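One reason Brooks gives for this effect is coordination overhead: if every member of a team must coordinate with every other member, the number of communication paths grows as n(n − 1)/2, much faster than the headcount itself. The short sketch below simply tabulates that formula; the function name and the sample team sizes are illustrative choices, not anything taken from the book.

```python
def communication_paths(team_size: int) -> int:
    """Return the number of distinct pairs in a team of the given size.

    This is n * (n - 1) / 2, the pairwise-coordination count that
    underlies the intuition behind Brooks's Law.
    """
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


if __name__ == "__main__":
    # Sample team sizes chosen purely for illustration.
    for n in (2, 5, 10, 20):
        print(f"{n:>2} people -> {communication_paths(n):>3} communication paths")
```

Doubling a team from 10 to 20 people doubles the available labor but more than quadruples the number of pairwise communication paths, from 45 to 190.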

Brooks once remarked that more people cited his “law” than obeyed it, saying he should have called his book, The Bible of Software Engineering, because “everybody quotes it, some people read it, and a few people go by it.”

A second edition of the book was published in 1982, while a third, twentieth-anniversary edition was published in 1995. The twentieth-anniversary edition contained an appendix featuring a famous essay Brooks had written in 1986 entitled “No Silver Bullet—Essence and Accident in Software Engineering.”

Firewalls and Internet Security: Repelling the Wily Hacker

By: William Cheswick, Steven M. Bellovin, and Aviel D. Rubin, 1994

Cheswick (born c. 1953) received his bachelor’s degree in fundamental science in 1975 from Lehigh University, in Bethlehem, Pennsylvania. He worked for several companies, including Computer Sciences Corporation, before joining AT&T Bell Labs in 1987, where he and Bellovin developed the first computer network firewall.

Bellovin (born c. 1950) earned his bachelor’s degree in 1972 from Columbia University in New York City and his PhD in 1982 from the University of North Carolina at Chapel Hill. He worked for AT&T Bell Labs from 1982 until 2004. Since 2005, he has been a professor of computer science at Columbia University.

Rubin (b. 1967) received his bachelor’s, master’s, and PhD degrees in computer science and engineering in 1989, 1991, and 1994, respectively, all from the University of Michigan at Ann Arbor. He is currently Professor of Computer Science at Johns Hopkins University in Baltimore, Maryland.

This book recounts the development of the first computer network firewall by Cheswick and Bellovin at AT&T Bell Labs in the 1980s. Their work helped define the concept of a firewall and heavily influenced the later formation of the perimeter security model, which during the mid-1990s became the dominant network security architecture.

Cheswick and Bellovin authored the first edition alone. They were joined by Rubin as co-author for the second edition, published in 2003.

The Computer and the Brain

By: John von Neumann, 1958

Von Neumann (1903–1957) was born and raised in Budapest, Hungary, into a middle-class, non-observant Jewish family. He was a child prodigy who at the age of six could divide eight-digit numbers in his head and converse in ancient Greek.

At the age of 19, von Neumann published a mathematics paper which gave the modern definition of ordinal numbers, superseding the definition advanced by Georg Cantor (1845–1918) that had been current up until that time.

Von Neumann pursued two courses of graduate studies simultaneously, earning a diploma in chemical engineering from ETH Zurich and a PhD in mathematics from Pázmány Péter University in Budapest, both in 1926. He then studied with David Hilbert (1862–1943) at the University of Göttingen, where he completed his Habilitation in 1927.

After finishing his formal studies, von Neumann concentrated on mathematics. By the end of 1929, he had published over 30 ground-breaking papers, an incredible pace of nearly one a month.

After briefly working as a Privatdozent at the Universities of Berlin and Hamburg, in 1930 von Neumann received an offer to teach at Princeton University in Princeton, New Jersey. Three years later, the newly founded Institute for Advanced Study (IAS), also in Princeton, offered von Neumann a lifetime professorship.

During World War II, von Neumann was recruited for the Manhattan Project and made important contributions to the development of the first atomic bomb.

Von Neumann made major contributions to an amazing number of fields of mathematics and science, of which the following are only some of the best known:

- Pure mathematics: set theory, proof theory, ergodic theory, measure theory, functional analysis, topological groups, operator algebras, geometry, lattice theory

- Physics: foundations of quantum mechanics, von Neumann entropy, quantum mutual information, density matrices, quantum logic

- Mathematical statistics

- Fluid dynamics

- Social science: game theory, economics, linear programming

- Computer science: merge sort algorithm, computer architecture, cellular automata, artificial intelligence

Von Neumann began to take an interest in the theory of computation even before World War II. He worked briefly with the father of computer science, Alan Turing (1912–1954), when the latter visited the IAS in the late 1930s.

After the war, von Neumann became deeply involved in the design and implementation of the first electronic digital computers. Specifically, he worked closely on the EDVAC (Electronic Discrete Variable Automatic Computer), one of the first electronic computers to be based on binary arithmetic (the earlier ENIAC had still been based on the decimal system). EDVAC was built between 1944 and 1949 for the Ballistic Research Laboratory at Aberdeen Proving Ground, a US Army installation in Maryland.

Von Neumann’s second major computer project was the IAS Machine, built at the IAS between 1945 and 1951 under his supervision and utilizing an architecture designed by him, now known as the “von Neumann architecture.”

Finally, von Neumann, who was universally known to his American friends as “Johnny,” was playfully immortalized by the RAND Corporation in their machine, the “Johnniac,” which copied the IAS Machine’s architecture and ran continuously from 1953 until 1966. The tongue-in-cheek nickname “Johnniac” supposedly stood for “John von Neumann Numerical Integrator and Automatic Computer.”

The book under consideration here was originally intended for Yale’s prestigious Silliman Lecture series, but von Neumann did not live to deliver the lectures. This posthumously published book is based on his unfinished lecture notes.

This book was an early and important contribution to the computational theory of the mind. It argues that the brain must be a kind of digital computer, though one with many features that surpass the technology of von Neumann’s day. The author speculates about the directions in which computers would have to develop to achieve the full capabilities of the human brain.

A second edition of the book was published in 2000, while a third edition was published in 2012.

For a look at some of the most influential books in data science, a field that is closely related to computer science, check out Data Science Tools and Trends and Books for Data Science.